AI systems are now part of many testing workflows, from generating test cases to evaluating behavior across complex applications. As teams rely more on AI-powered testing tools, understanding how AI reaches its decisions becomes just as important as the result itself. Explainable AI methods give development and QA teams a way to inspect, validate, and trust AI-driven behavior instead of treating it as a black box.

For software teams, explainability turns AI into something that can be tested with intent and purpose, while saving time. ContextQA supports this shift by helping teams observe AI-driven flows, compare behavior across releases, and identify patterns that point to deeper issues rather than surface-level failures.

What Are Explainable AI Methods?

So, what is explainable AI? Explainable AI methods are techniques that make AI decisions easier to understand. Instead of returning only an output, they show which inputs, rules, or signals influenced the result. In testing, this means QA teams can see why an AI-driven check passed or failed, not just that it did.

These methods are especially useful when AI affects user access, risk decisions, recommendations, or automated actions. Testing teams use explainable AI methods to confirm that behavior matches expectations and remains stable as systems change.

Why Do Explainable AI Methods Matter for Testing?

AI behavior can shift as data changes or models are updated. Without explainability, these shifts are difficult to detect early. Explainable methods give testers clearer signals when behavior starts to drift.
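One way to make drift visible is to compare the explanation a model gives for the same input across two versions. The minimal sketch below assumes each version returns per-feature attribution scores as a dictionary; the feature names, scores, and the `0.2` tolerance are hypothetical values chosen for illustration, and L1 distance is just one simple choice of comparison.

```python
def attribution_drift(baseline: dict[str, float], candidate: dict[str, float]) -> float:
    """Return the L1 distance between two attribution maps (missing features count as 0)."""
    features = set(baseline) | set(candidate)
    return sum(abs(baseline.get(f, 0.0) - candidate.get(f, 0.0)) for f in features)


# Hypothetical attributions for the same input under two model versions.
v1 = {"income": 0.55, "credit_history": 0.30, "zip_code": 0.05}
v2 = {"income": 0.35, "credit_history": 0.25, "zip_code": 0.30}

DRIFT_TOLERANCE = 0.2  # assumed threshold; tune per model and feature scale
drift = attribution_drift(v1, v2)
print(f"drift={drift:.2f}, flagged={drift > DRIFT_TOLERANCE}")
```

Here the outcome might not change at all, yet `zip_code` gaining weight is exactly the kind of reasoning shift a pass/fail check would miss.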

From a QA perspective, explainability improves:

- failure investigation

- regression confidence

- audit readiness

- communication with developers

ContextQA helps teams apply explainability in root cause analysis by capturing AI-driven behavior within end-to-end flows and highlighting changes that affect outcomes or reasoning.

Common Explainable AI Methods Used in Testing

Explainable AI methods vary depending on how models are built and used. Testing teams often encounter several of these approaches in real systems.

Feature attribution methods

These methods show which inputs influenced a decision and by how much. In testing, this helps teams verify that the correct data points are driving outcomes.
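For a linear scoring model, feature attribution can be computed exactly as `weight * (value - baseline)`; non-linear models usually rely on a library such as SHAP or LIME instead. The sketch below uses a hand-written linear model so the logic stays visible. The feature names, weights, bias, and baseline are all hypothetical.

```python
# Hypothetical linear scoring model: score = BIAS + sum(weight * value).
BIAS = 0.2
WEIGHTS = {"income": 0.8, "debt_ratio": -1.5, "account_age_years": 0.1}
BASELINE = {"income": 1.0, "debt_ratio": 0.3, "account_age_years": 5.0}  # assumed reference input


def score(x: dict[str, float]) -> float:
    return BIAS + sum(WEIGHTS[f] * x[f] for f in WEIGHTS)


def attributions(x: dict[str, float]) -> dict[str, float]:
    """Per-feature contribution relative to the baseline.

    For a linear model these contributions sum exactly to score(x) - score(BASELINE).
    """
    return {f: WEIGHTS[f] * (x[f] - BASELINE[f]) for f in WEIGHTS}


def top_feature(x: dict[str, float]) -> str:
    """Feature with the largest absolute contribution."""
    contrib = attributions(x)
    return max(contrib, key=lambda f: abs(contrib[f]))


# Test: a debt ratio far above the baseline should be the main driver of this applicant's score.
applicant = {"income": 1.1, "debt_ratio": 0.9, "account_age_years": 6.0}
assert top_feature(applicant) == "debt_ratio", attributions(applicant)
```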

Rule-based explanations

Some systems expose logic paths or rules that lead to a decision. Tests confirm that rules trigger correctly under different conditions.
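When a system reports which rule fired, a table-driven test can pin both the outcome and the rule for each condition, including the exact boundary values. The `decide` function, its rule identifiers, and the `10_000` threshold below are hypothetical stand-ins for a real system's logic.

```python
from typing import NamedTuple


class Decision(NamedTuple):
    outcome: str
    rule: str  # identifier of the rule that produced the outcome


def decide(amount: float, country: str, verified: bool) -> Decision:
    """Hypothetical rule-based approval logic that reports which rule fired."""
    if not verified:
        return Decision("reject", "R1_unverified_user")
    if country not in {"US", "CA"}:
        return Decision("review", "R2_unsupported_country")
    if amount > 10_000:
        return Decision("review", "R3_high_amount")
    return Decision("approve", "R0_default_approve")


# Each case pins both the outcome and the rule expected to trigger.
CASES = [
    ((500, "US", False), Decision("reject", "R1_unverified_user")),
    ((500, "FR", True), Decision("review", "R2_unsupported_country")),
    ((10_001, "CA", True), Decision("review", "R3_high_amount")),
    ((10_000, "US", True), Decision("approve", "R0_default_approve")),
]

for args, expected in CASES:
    actual = decide(*args)
    assert actual == expected, f"{args}: expected {expected}, got {actual}"
```

Asserting on the rule as well as the outcome catches cases where the right answer is reached for the wrong reason.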

Counterfactual explanations

These explain what would need to change for a different outcome to occur. QA teams use these to validate boundary conditions and edge cases.
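A simple way to see a counterfactual in action is to search one feature for the smallest change that flips the decision, then test both sides of that boundary. The sketch below is a brute-force, single-feature search for illustration only; dedicated counterfactual tools search across many features at once. The `approve` model and its threshold are hypothetical.

```python
from typing import Callable, Optional


def approve(income: float, debt: float) -> bool:
    """Hypothetical model: approve when income minus twice the debt reaches 50 (thousands)."""
    return income - 2 * debt >= 50


def income_counterfactual(
    model: Callable[[float, float], bool],
    income: float,
    debt: float,
    step: float = 1.0,
    max_income: float = 500.0,
) -> Optional[float]:
    """Smallest income at or above the current one (on a grid of `step`) that the model
    approves, or None if no such income exists up to `max_income`."""
    candidate = income
    while candidate <= max_income:
        if model(candidate, debt):
            return candidate
        candidate += step
    return None


cf = income_counterfactual(approve, income=40, debt=10)
print(f"Rejected at income 40; approval would need income {cf}")
```

A boundary test then checks that the counterfactual value is approved and the value one step below it is not.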

Model transparency outputs

Certain models expose internal states or confidence scores. Testing teams check that these values stay within expected ranges across runs.
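A range check on confidence scores can be as small as the sketch below, which collects scores from repeated runs of the same input and flags invalid values, values outside an expected band, and excessive run-to-run spread. It assumes scores are probabilities in `[0, 1]`; the band and spread limits are hypothetical and would come from a team's own baselines.

```python
import statistics


def check_confidence_runs(
    scores: list[float], low: float, high: float, max_spread: float
) -> list[str]:
    """Return problems found in confidence scores from repeated runs; empty means healthy."""
    problems = []
    for i, s in enumerate(scores):
        if not 0.0 <= s <= 1.0:
            problems.append(f"run {i}: {s} is not a valid probability")
        elif not low <= s <= high:
            problems.append(f"run {i}: {s} outside expected range [{low}, {high}]")
    # Population standard deviation as a simple measure of run-to-run instability.
    if len(scores) > 1 and statistics.pstdev(scores) > max_spread:
        problems.append(f"spread {statistics.pstdev(scores):.3f} exceeds {max_spread}")
    return problems


stable = [0.91, 0.92, 0.90]
unstable = [0.91, 0.62, 1.2]
print(check_confidence_runs(stable, 0.85, 0.99, 0.02))
print(check_confidence_runs(unstable, 0.85, 0.99, 0.02))
```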

Each method gives testers more visibility into how AI behaves under different scenarios.

How Testing Teams Use Explainable AI Methods

Explainable AI methods fit naturally into modern testing workflows. Teams use them to validate behavior across user journeys, data inputs, and system updates.

Testing often includes:

- running flows with varied data

- reviewing decision outputs

- validating explanation fields

- comparing behavior across versions

- confirming downstream system responses
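Validating explanation fields often starts with a schema-style check on each response. The sketch below assumes a hypothetical JSON payload with a `decision` and an `explanation` object holding `reasons` and numeric `attributions`; real services will use their own field names.

```python
import json

REQUIRED_FIELDS = {"decision", "explanation"}
ALLOWED_DECISIONS = {"approve", "review", "reject"}


def validate_explanation(payload: str) -> list[str]:
    """Return problems with a (hypothetical) JSON decision response; empty means valid."""
    data = json.loads(payload)
    missing = sorted(REQUIRED_FIELDS - set(data))
    if missing:
        return [f"missing field: {name}" for name in missing]

    errors = []
    if data["decision"] not in ALLOWED_DECISIONS:
        errors.append(f"unknown decision: {data['decision']!r}")
    explanation = data["explanation"]
    if not isinstance(explanation, dict):
        return errors + ["explanation must be an object"]
    if not explanation.get("reasons"):
        errors.append("explanation.reasons is empty or missing")
    attributions = explanation.get("attributions", {})
    if not all(isinstance(v, (int, float)) for v in attributions.values()):
        errors.append("explanation.attributions must contain only numbers")
    return errors


good = (
    '{"decision": "reject", "explanation": '
    '{"reasons": ["debt ratio above limit"], "attributions": {"debt_ratio": -0.9}}}'
)
print(validate_explanation(good))
```

Running the same check against responses from two versions is a cheap first step before comparing the explanations themselves.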

ContextQA supports this work by allowing teams to build reusable test models that include AI outputs and explanations together. Using AI in software testing helps teams spot meaningful changes faster.

Explainable AI Methods and Compliance

In regulated environments like fintech, legal services and healthcare, explainability is often required. Systems that make automated decisions must be auditable and understandable. Testing teams play a key role in confirming that explanations are present, accurate, and consistent.

Explainable AI methods help teams test:

- decision transparency

- explanation accuracy

- stability across environments

- alignment with business rules
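Two of these checks, stability across environments and alignment with business rules, can be combined in one audit: run the same input through each environment and confirm that every reason code is on an approved list and that all environments agree. The reason codes, environment names, and `rules_explainer` below are hypothetical stand-ins.

```python
from typing import Callable

# Hypothetical reason codes approved by the business or compliance team.
APPROVED_REASON_CODES = {"DEBT_RATIO_HIGH", "INCOME_BELOW_MIN", "ACCOUNT_TOO_NEW"}

Explainer = Callable[[dict], list[str]]


def audit_explanations(explainers: dict[str, Explainer], case: dict) -> list[str]:
    """Run one input through every environment's explainer and report compliance findings."""
    findings = []
    results = {env: explain(case) for env, explain in explainers.items()}
    for env, codes in results.items():
        unknown = set(codes) - APPROVED_REASON_CODES
        if unknown:
            findings.append(f"{env}: unapproved reason codes {sorted(unknown)}")
    # Order-insensitive comparison of the explanations returned by each environment.
    distinct = {tuple(sorted(codes)) for codes in results.values()}
    if len(distinct) > 1:
        findings.append(f"explanations differ across environments: {results}")
    return findings


def rules_explainer(case: dict) -> list[str]:
    """Stand-in explainer that derives reason codes from simple business rules."""
    codes = []
    if case["debt_ratio"] > 0.4:
        codes.append("DEBT_RATIO_HIGH")
    if case["income"] < 30_000:
        codes.append("INCOME_BELOW_MIN")
    return codes


case = {"debt_ratio": 0.55, "income": 25_000}
print(audit_explanations({"staging": rules_explainer, "production": rules_explainer}, case))
```

Because the findings are plain strings, they can be stored with each run and reviewed later as audit evidence.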

ContextQA helps teams capture this behavior as part of full test flows, making compliance checks easier to repeat and review.

Benefits for Automation and Regression Testing

Explainable AI methods strengthen test automation by reducing guesswork. When a test fails, testers can see why rather than manually tracing behavior.

This improves:

- regression coverage

- failure triage speed

- test reliability

- confidence during releases
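Faster failure triage can come from something as small as attaching the model's explanation to the assertion message, so a failing run already says why the decision changed. The sketch assumes a hypothetical decision payload with an `outcome` and an optional `explanation.reasons` list; a test runner such as pytest will show the message when the assertion fails.

```python
from typing import Optional


def explain_mismatch(actual: dict, expected_outcome: str) -> Optional[str]:
    """Return None if the outcome matches, otherwise a message that includes the model's reasons."""
    if actual["outcome"] == expected_outcome:
        return None
    reasons = actual.get("explanation", {}).get("reasons") or ["<no explanation returned>"]
    return (
        f"expected {expected_outcome!r}, got {actual['outcome']!r}; "
        f"model reasons: {'; '.join(reasons)}"
    )


def assert_decision(actual: dict, expected_outcome: str) -> None:
    """Fail with the explanation attached, so triage starts from the model's own reasons."""
    message = explain_mismatch(actual, expected_outcome)
    if message is not None:
        raise AssertionError(message)


failed = {"outcome": "reject", "explanation": {"reasons": ["debt_ratio 0.9 above limit 0.4"]}}
print(explain_mismatch(failed, "approve"))
```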

ContextQA supports these benefits by linking explainable outputs to automated test runs so teams can track behavior changes over time. Using intelligent, verified automation is one of the best ways to improve overall testing speed and accuracy.

Explainable AI Methods in Real Products

Explainable AI methods appear in many of the systems teams test today, across industries and sectors.

- Ecommerce platforms explain recommendations and fraud alerts

- Financial systems explain risk scores and transaction decisions

- Healthcare systems explain alerts and prioritization

- Internal tools explain automated workflow decisions

Testing teams rely on explainability to show clients, customers, and stakeholders alike that these systems behave consistently and responsibly.

How ContextQA Supports Explainable AI Testing

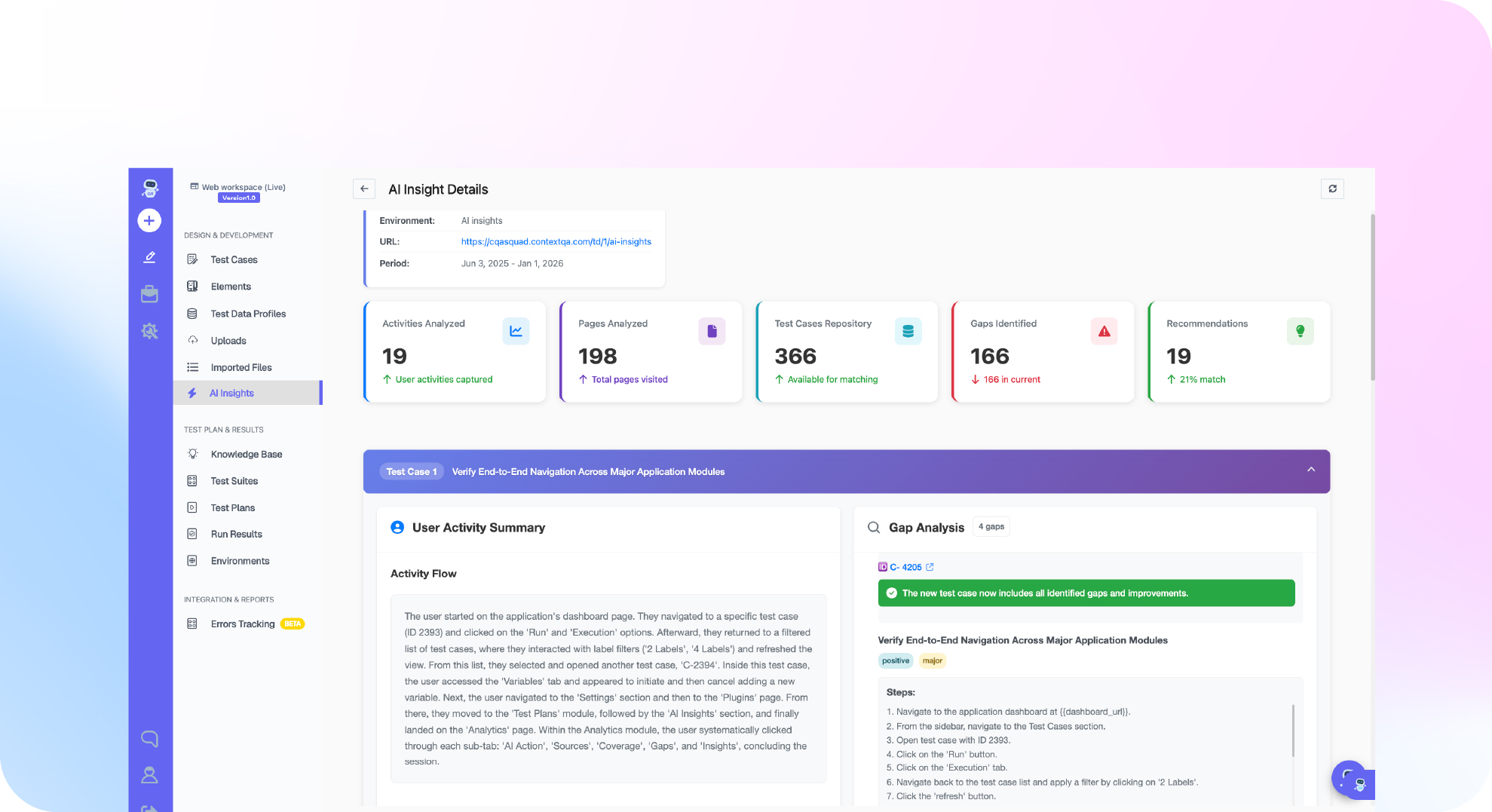

ContextQA’s AI features help teams test explainable AI by capturing AI-driven decisions as part of real user flows. Tests include both outcomes and the explanations behind them. When behavior changes, teams see exactly where and why.

This approach helps teams move beyond surface-level checks and validate AI behavior in a way that scales with product complexity. From AI prompt engineering practices to mobile test automation and more, teams can customize the platform to make testing faster and smoother.

Conclusion

Explainable AI methods give testing teams a clearer way to understand and validate AI behavior. By exposing how decisions are made, these methods reduce uncertainty, support compliance, and make failures easier to diagnose. Modern testing relies on this visibility to keep AI-driven systems reliable over time.

ContextQA supports this approach by capturing explainable behavior inside automated workflows, helping teams test AI systems with clarity rather than guesswork.

Book a demo of ContextQA to see a customizable explainable AI tool in action and try it for yourself.