Your AI Accessibility Tools Just Got a Serious Upgrade

Trusted by leading engineering and QA teams

Stronger prompts lead to stronger tests.

Faster triage

Your Shortcut to Fast, Compliant UX

One Simple Score for Your User Experience Quality

Smarter Testing Means Lighter Workloads

Test Both Without Switching Tools

Deliver Speed That Includes All Users

Cut Maintenance Across Teams

Stay Compliant Without Extra Effort

Scale Coverage Across Your Entire Application

The Engine Behind Speed and Quality

Realistic Load Simulation

Performance tests simulate concurrent users, network throttling, and device-specific constraints to measure real-world responsiveness and stability.

Compliance Scanning in Context

Accessibility checks evaluate WCAG standards, ARIA implementation, color contrast, focus management, and keyboard navigation while pages are under load.
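
To make the color contrast check concrete, here is a minimal sketch of the contrast ratio calculation as defined in WCAG 2.x. The function names are illustrative and are not part of any ContextQA API; the formula and the 4.5:1 and 3:1 AA thresholds come from the WCAG specification.

```python
def _linearize(channel_8bit: int) -> float:
    """Convert an 8-bit sRGB channel to linear light (WCAG 2.x definition)."""
    c = channel_8bit / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a color written as '#rrggbb'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(foreground: str, background: str) -> float:
    """Contrast ratio between two colors, from 1.0 (identical) to 21.0 (black on white)."""
    lum_a = relative_luminance(foreground)
    lum_b = relative_luminance(background)
    lighter, darker = max(lum_a, lum_b), min(lum_a, lum_b)
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(foreground: str, background: str, large_text: bool = False) -> bool:
    """WCAG AA requires at least 4.5:1 for normal text and 3:1 for large text."""
    threshold = 3.0 if large_text else 4.5
    return contrast_ratio(foreground, background) >= threshold
```

For example, `passes_aa("#767676", "#ffffff")` is true, while the slightly lighter `#777777` on white falls just below 4.5:1 and fails for normal-size text.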

AI-Powered Issue Prioritization

AI accessibility tools analyze both performance bottlenecks and accessibility violations, ranking them by severity, user impact, and regulatory risk.
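
One way to picture this kind of ranking is a weighted score over the three factors named above. The sketch below is purely illustrative: the `Issue` fields, the weights, and the sample findings are assumptions made up for this example, not ContextQA's actual scoring model.

```python
from dataclasses import dataclass

# Hypothetical weights, chosen only for illustration.
WEIGHTS = {"severity": 0.5, "user_impact": 0.3, "regulatory_risk": 0.2}


@dataclass(frozen=True)
class Issue:
    name: str
    kind: str               # "performance" or "accessibility"
    severity: float         # each factor is scored from 0.0 to 1.0
    user_impact: float
    regulatory_risk: float


def priority(issue: Issue) -> float:
    """Weighted sum of the three ranking factors."""
    return (
        WEIGHTS["severity"] * issue.severity
        + WEIGHTS["user_impact"] * issue.user_impact
        + WEIGHTS["regulatory_risk"] * issue.regulatory_risk
    )


def rank(issues: list[Issue]) -> list[Issue]:
    """Return issues ordered from highest to lowest priority."""
    return sorted(issues, key=priority, reverse=True)


# Made-up sample findings mixing both issue types.
findings = [
    Issue("Low-contrast footer link", "accessibility", 0.3, 0.2, 0.6),
    Issue("Slow checkout API under load", "performance", 0.9, 0.8, 0.1),
    Issue("Missing form labels", "accessibility", 0.8, 0.7, 0.9),
]
ranked_names = [issue.name for issue in rank(findings)]
```

With these assumed weights, the missing form labels rank first because regulatory risk lifts them above the more severe but lower-risk checkout slowdown.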

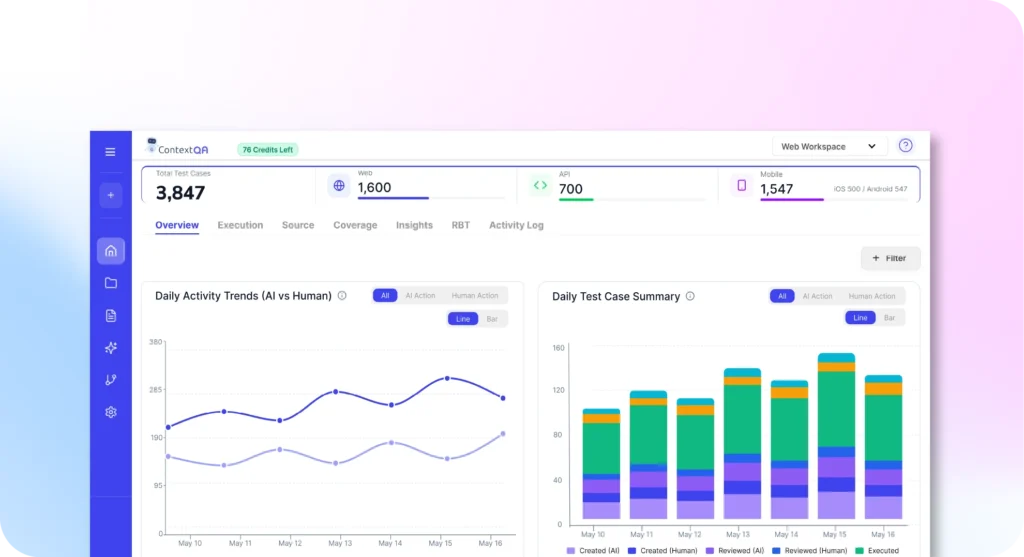

Single Unified Report

Performance metrics, accessibility findings, link health status, and UXQ scores appear in one dashboard. Teams see the complete picture without switching views.

Maintain Quality as Fast as You Move

ContextQA gives teams early signals during development and continuous signals in production, so they’re always one step ahead.

Shift-Left Testing

Combined performance and accessibility tests run at pull request and build time, surfacing issues before merge. Developers get immediate feedback on both speed regressions and compliance gaps.
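
A pull request gate of this kind usually comes down to comparing measured results against agreed budgets. The following is a minimal sketch under stated assumptions: the metric names and budget values are hypothetical, and how results are collected depends on your own pipeline.

```python
# Hypothetical budgets: the metric names and limits are illustrative only.
BUDGETS = {
    "p95_latency_ms": 800.0,
    "critical_a11y_violations": 0.0,
}


def gate_failures(results: dict, budgets: dict = BUDGETS) -> list[str]:
    """Return one human-readable message per exceeded or missing budget."""
    failures = []
    for metric, limit in budgets.items():
        value = results.get(metric)
        if value is None:
            # A metric that was never reported should not silently pass.
            failures.append(f"{metric}: no result reported")
        elif value > limit:
            failures.append(f"{metric}: {value} exceeds budget {limit}")
    return failures


def exit_code(results: dict) -> int:
    """0 lets the build proceed; 1 blocks the merge."""
    return 1 if gate_failures(results) else 0
```

A pipeline step could pass `exit_code(results)` to `sys.exit` so that a speed regression or a new critical violation blocks the merge.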

Shift-Right Monitoring

Continuous validation tracks performance drift and accessibility regressions in production. Real-world usage patterns feed back into test suites, keeping validation aligned with actual user behavior.

Closed-Loop Validation

Signals from production monitoring trigger automated test updates, creating a self-improving testing cycle that adapts to your application's evolution.

Your Quality Gets Smarter, but Workflow Stays the Same

Automated CI/CD checks

Continuous production insight

AI-driven test maintenance

Release protection built in

Your Full Suite of AI Accessibility Tools

| No-code scenario design | Realistic traffic simulation | Automated accessibility scanning |
|---|---|---|
| Create load tests and accessibility checks through a visual interface, so non-technical team members can contribute to test coverage without writing scripts. | Generate concurrent user loads and network throttling patterns that mirror production conditions, giving teams accurate performance baselines. | Run WCAG, ARIA, and contrast checks across entire user flows automatically, catching compliance gaps without manual reviews. |

| AI-generated remediation | Link health monitoring |
|---|---|
| Get structured fix suggestions with implementation details for every violation, turning hours of research into actionable next steps. | Detect and flag broken navigation, redirect loops, and dead endpoints before they impact users or search rankings. |

How Different Teams Use Unified Testing

QA & SRE Teams

QA and SRE teams run unified tests that surface slowdowns, stability issues, and accessibility violations in one pass, with prioritized signals showing what to fix first.

Front-End Engineering

Front-end engineers trigger checks for layout shifts, contrast violations, ARIA gaps, and load-time regressions with every UI update, and see exactly how to fix each issue without leaving their workflow.

Product & UX Teams

Product and UX teams track how user journeys perform across touchpoints, giving them insights into maintaining experience quality at scale.

DevOps & Release Teams

DevOps teams configure pipelines to run performance and compliance checks automatically, so failed builds are flagged before deployment.

Compliance & Legal Teams

Compliance and legal teams generate audit-ready reports that map accessibility violations to specific pages, components, and commits.

SEO & Web Operations Teams

SEO and web operations teams use link health monitoring to catch broken navigation and redirect loops before they damage search rankings or site reliability, while performance checks help protect Core Web Vitals scores.
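
As a rough illustration of what link health monitoring looks for, the sketch below classifies a link by following a redirect map until it resolves, loops, or hits a dead endpoint. It works on an in-memory map rather than live HTTP requests, and the function name, status labels, and sample URLs are all assumptions made for this example.

```python
def classify_link(start: str, redirects: dict, dead: set, max_hops: int = 10) -> str:
    """Follow redirects from `start` and report how the chain ends.

    redirects maps a URL to the URL it redirects to.
    dead holds URLs known to return an error.
    """
    seen = [start]
    current = start
    while True:
        if current in dead:
            return "dead"
        target = redirects.get(current)
        if target is None:
            return "ok"                  # chain ends at a live page
        if target in seen:
            return "redirect_loop"       # the chain revisits an earlier URL
        if len(seen) > max_hops:
            return "too_many_redirects"
        seen.append(target)
        current = target


# Made-up site map for illustration.
redirect_map = {"/old": "/new", "/a": "/b", "/b": "/a", "/promo": "/gone"}
dead_urls = {"/gone"}
```

Here `/old` resolves cleanly to `/new`, `/a` and `/b` redirect to each other and are reported as a loop, and `/promo` ends at a dead endpoint.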