
TL;DR: Chaos engineering is the practice of deliberately injecting failures into a system to test how it responds, recovers, and maintains service quality. Netflix pioneered the approach with Chaos Monkey in 2011, and it has since been adopted as standard practice for cloud-native applications. The Principles of Chaos Engineering define it as “the discipline of experimenting on a system to build confidence in the system’s capability to withstand turbulent conditions in production.” For QA teams, chaos engineering reveals bugs that functional tests never find: the hidden dependencies, missing fallbacks, and broken recovery mechanisms that only surface when something actually fails.

Definition: Chaos Engineering. The practice of experimenting on a software system by deliberately introducing controlled failures (such as server crashes, network latency, disk exhaustion, or service outages) to verify that the system’s resilience mechanisms work correctly. The Principles of Chaos Engineering define the core process: form a hypothesis about how the system should behave during a failure, inject the failure in a controlled manner, observe the results, and improve the system based on what you learn. Unlike traditional testing, which verifies that things work correctly, chaos engineering verifies that things fail safely.
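The hypothesize, inject, observe cycle can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the API of any chaos tool: `run_experiment`, `ExperimentResult`, and the toy `system` dictionary are hypothetical names invented for the example.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ExperimentResult:
    hypothesis: str
    held: bool


def run_experiment(
    hypothesis: str,
    inject: Callable[[], None],
    rollback: Callable[[], None],
    steady_state_ok: Callable[[], bool],
) -> ExperimentResult:
    """Run one hypothesis -> inject -> observe cycle (hypothetical helper)."""
    # Refuse to start if the system is already unhealthy: the result would be meaningless.
    if not steady_state_ok():
        raise RuntimeError("system is not in steady state; aborting before injection")
    inject()
    try:
        held = steady_state_ok()  # observe while the failure is active
    finally:
        rollback()  # always remove the failure, even if observation raises
    return ExperimentResult(hypothesis=hypothesis, held=held)


# Toy system (an assumption for the demo): pages load from the cache, or from the
# database when the cache is down and a fallback exists.
system = {"cache_up": True, "db_fallback": True}


def page_loads() -> bool:
    return system["cache_up"] or system["db_fallback"]


result = run_experiment(
    hypothesis="If the cache is down, pages still load from the database",
    inject=lambda: system.update(cache_up=False),
    rollback=lambda: system.update(cache_up=True),
    steady_state_ok=page_loads,
)
print(result.held)
```

When `held` comes back `False`, the fourth step begins: fix the gap, then rerun the same experiment to confirm the fix.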

Here is a question I want you to answer honestly: do you know what happens to your application when a database server goes down?

Not what should happen. Not what the architecture diagram says will happen. What actually happens. Right now. In production.

Most teams cannot answer this question with confidence. They have auto-scaling configured. They have failover mechanisms in place. They have health checks and circuit breakers. But they have never actually tested whether those mechanisms work when things go wrong in production.

That is exactly the gap chaos engineering fills. It does not test whether your application works. It tests whether your application survives.

Netflix learned this lesson in 2011 when they moved to AWS. They realized that in a cloud environment, servers fail constantly. Instead of trying to prevent every failure (impossible), they decided to assume failure and build systems that handle it. They created Chaos Monkey, a tool that randomly terminates production servers during business hours. If Netflix can still stream movies while servers are dying, their architecture is resilient.

The approach worked so well that Netflix expanded it into the Simian Army: Chaos Gorilla (simulates entire availability zone failures), Latency Monkey (introduces network delays), and Chaos Kong (simulates entire region outages). During a real DynamoDB outage in 2015, Netflix experienced less downtime than other AWS customers because they had already tested their system’s response to exactly that type of failure.

ContextQA’s performance testing validates system behavior under load, but chaos engineering goes further: it validates behavior under failure. The combination of performance testing and chaos engineering gives QA teams confidence that their application handles both traffic spikes and infrastructure failures.

Quick Answers:

What is chaos engineering? Chaos engineering deliberately injects failures (server crashes, network latency, service outages) into a system to verify that resilience mechanisms work. It tests what happens when things go wrong, not whether features work correctly. The goal is building confidence that the system can withstand real-world turbulent conditions.

Why do QA teams need chaos engineering? Functional tests verify that features work. Performance tests verify that features work under load. Chaos tests verify that the system still works when infrastructure fails. Without chaos engineering, you discover broken fallbacks and missing recovery mechanisms during real incidents, not before them.

Is chaos engineering safe for production? Yes, when done correctly. Start with small, controlled experiments: add latency to one service rather than terminating every server. Use tools with built-in safety controls such as blast radius limits and automatic rollback. Gremlin, for example, offers engineer-driven experiments with a controlled blast radius and halt conditions.

The Five Types of Chaos Experiments for QA Teams

1. Infrastructure Failures

What you inject: Server termination, CPU exhaustion, memory pressure, disk full conditions.

What you learn: Whether auto-scaling works, whether health checks detect failures, whether load balancers route traffic away from unhealthy instances.

QA relevance: These failures affect every test environment. If your staging server runs out of disk during a test run, does your CI/CD pipeline detect it and report the correct failure? Or does it report false test failures that waste investigation time?
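One way to keep a full disk from masquerading as a test failure is a preflight check before the test run. The sketch below uses Python's standard `shutil.disk_usage`; the 1 GiB default threshold and the `classify_run` helper are assumptions made for illustration.

```python
import shutil


def has_free_disk(path: str = ".", min_free_bytes: int = 1024**3) -> bool:
    """Return True if the filesystem holding `path` has at least `min_free_bytes` free."""
    return shutil.disk_usage(path).free >= min_free_bytes


def classify_run(tests_passed: bool, disk_ok: bool) -> str:
    """Label a pipeline run so environment problems are not reported as test failures."""
    if not disk_ok:
        return "environment issue"  # results are untrustworthy regardless of pass/fail
    return "passed" if tests_passed else "test failure"


disk_ok = has_free_disk(".")
print(classify_run(tests_passed=False, disk_ok=disk_ok))
```

A disk-fill experiment in staging then has a clear expected outcome: the run should be labeled as an environment issue, not as a batch of failing tests.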

2. Network Failures

What you inject: Latency spikes, packet loss, DNS failures, connection timeouts.

What you learn: Whether your application handles slow responses gracefully, whether timeout configurations are correct, whether retry logic works without creating cascading failures.

QA relevance: Many flaky tests are caused by network issues, not code bugs. Chaos experiments that inject latency into test environments help QA teams distinguish between real bugs and network-induced test failures. ContextQA’s root cause analysis classifies failures by type, separating environment issues from code defects.
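Retry logic that avoids cascading failures usually has two properties: a hard cap on attempts, and randomized delays so clients do not retry in lockstep. The following is a minimal sketch of capped exponential backoff with full jitter; `call_with_retries`, its defaults, and the `flaky` stand-in service are hypothetical.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_retries(
    operation: Callable[[], T],
    max_attempts: int = 3,
    base_delay: float = 0.1,
    max_delay: float = 2.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Retry a call that raises TimeoutError, with capped exponential backoff and jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except TimeoutError:
            if attempt == max_attempts:
                raise  # bounded retries keep a slow dependency from being hammered
            # Full jitter: wait a random time up to the capped exponential delay.
            ceiling = min(max_delay, base_delay * 2 ** (attempt - 1))
            sleep(random.uniform(0, ceiling))
    raise AssertionError("unreachable")


# Stand-in for a service under injected latency: times out twice, then succeeds.
attempts = {"n": 0}


def flaky() -> str:
    attempts["n"] += 1
    if attempts["n"] < 3:
        raise TimeoutError("injected latency exceeded the timeout")
    return "ok"


delays = []  # record the delays instead of sleeping, to keep the demo instant
print(call_with_retries(flaky, sleep=delays.append))
```

A latency experiment can then check that the number of calls reaching the slowed service stays within `max_attempts` per request instead of multiplying.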

3. Service Dependency Failures

What you inject: Terminate a downstream service, return error responses from an API, slow down a database.

What you learn: Whether circuit breakers trip correctly, whether fallback responses are served, whether the user experience degrades gracefully instead of crashing entirely.

QA relevance: When your application depends on 10 external services, any of them can fail at any time. Contract testing (which validates API structure) does not test what happens when the API is completely unavailable. Chaos testing fills that gap.
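To make "circuit breakers trip correctly" concrete, here is a deliberately small circuit breaker with a fallback. It is a sketch, not a production library: the `CircuitBreaker` class, its thresholds, and the fake payment call are assumptions for the example.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitBreaker:
    """Opens after N consecutive failures; allows a trial call after a cooldown."""

    def __init__(
        self,
        failure_threshold: int = 3,
        reset_after: float = 30.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.failure_threshold = failure_threshold
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, operation: Callable[[], T], fallback: Callable[[], T]) -> T:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                return fallback()  # open: fail fast without touching the dependency
            self.opened_at = None  # cooldown elapsed: allow one trial call
        try:
            result = operation()
        except Exception:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()  # trip (or re-trip) the breaker
            return fallback()
        self.failures = 0  # a success closes the breaker fully
        return result


# Simulated outage of a hypothetical payment service, with a controllable clock.
now = {"t": 0.0}
calls = {"n": 0}


def payment_down() -> str:
    calls["n"] += 1
    raise ConnectionError("payment service unavailable (injected)")


breaker = CircuitBreaker(failure_threshold=2, reset_after=30.0, clock=lambda: now["t"])
responses = [breaker.call(payment_down, lambda: "cart saved, try again later") for _ in range(5)]
print(calls["n"], responses[-1])
```

Five requests arrive but only two reach the failing dependency; the rest are answered by the fallback. That ratio is the kind of observable a dependency-failure experiment can assert on.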

4. State and Data Failures

What you inject: Database failover, cache invalidation, stale data, data corruption.

What you learn: Whether database replication works, whether cache recovery is transparent to users, whether the application handles stale data without incorrect behavior.

QA relevance: ContextQA’s database testing validates data integrity under normal conditions. Chaos experiments add the “what if” layer: what if the primary database fails over to the replica? Is the data still consistent?
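A failover experiment needs a concrete definition of "consistent". One simple option, sketched below, is to record every write the primary acknowledged before the failover and compare it with what the promoted replica returns afterwards. The in-memory dictionaries stand in for real database reads, and `compare_after_failover` is a hypothetical helper.

```python
from typing import Dict, List, NamedTuple


class ConsistencyReport(NamedTuple):
    missing: List[str]     # acknowledged writes the replica never received
    mismatched: List[str]  # keys present on the replica with a different value


def compare_after_failover(
    acknowledged: Dict[str, str], replica: Dict[str, str]
) -> ConsistencyReport:
    """Compare writes acknowledged before failover with the promoted replica's data."""
    missing = sorted(key for key in acknowledged if key not in replica)
    mismatched = sorted(
        key for key, value in acknowledged.items()
        if key in replica and replica[key] != value
    )
    return ConsistencyReport(missing=missing, mismatched=mismatched)


# Example data (invented): order-3 was acknowledged but not yet replicated,
# and order-2 carries an older status on the replica.
acknowledged = {"order-1": "paid", "order-2": "shipped", "order-3": "paid"}
replica = {"order-1": "paid", "order-2": "paid"}

report = compare_after_failover(acknowledged, replica)
print(report.missing, report.mismatched)
```

An empty report supports the hypothesis; anything else points at replication lag or lost writes that normal-condition database tests would not reveal.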

5. Application-Level Failures

What you inject: Memory leaks, thread pool exhaustion, exception handling failures, deployment rollback.

What you learn: Whether monitoring detects the problem, whether alerts fire correctly, whether runbooks provide the right remediation steps.

QA relevance: Application-level chaos experiments validate the observability stack that your QA team depends on. If monitoring does not detect a memory leak during a chaos experiment, it will not detect one in production either.

| Experiment Type | Inject | Validates | Tools |
| --- | --- | --- | --- |
| Infrastructure | Server termination, CPU/memory | Auto-scaling, health checks, load balancing | Gremlin, Chaos Monkey, AWS FIS |
| Network | Latency, packet loss, DNS failure | Timeouts, retries, circuit breakers | Gremlin, Chaos Mesh, tc (Linux) |
| Service dependency | API unavailability, slow responses | Fallbacks, graceful degradation | Gremlin, LitmusChaos |
| State/Data | DB failover, cache eviction | Replication, data consistency | Gremlin, custom scripts |
| Application | Memory leak, thread exhaustion | Monitoring, alerting, runbooks | Gremlin, stress-ng |

How to Start Chaos Engineering in Your QA Team

Chaos engineering does not mean randomly breaking production on day one. Here is the practical path for QA teams.

Phase 1: Hypothesize (Week 1)

Start by documenting what you believe should happen when specific components fail. Example hypotheses:

- “If the payment service becomes unavailable, users see a friendly error message and their cart is preserved.”

- “If database latency increases to 5 seconds, the application returns cached data instead of timing out.”

- “If a web server is terminated, the load balancer routes traffic to healthy servers within 10 seconds.”

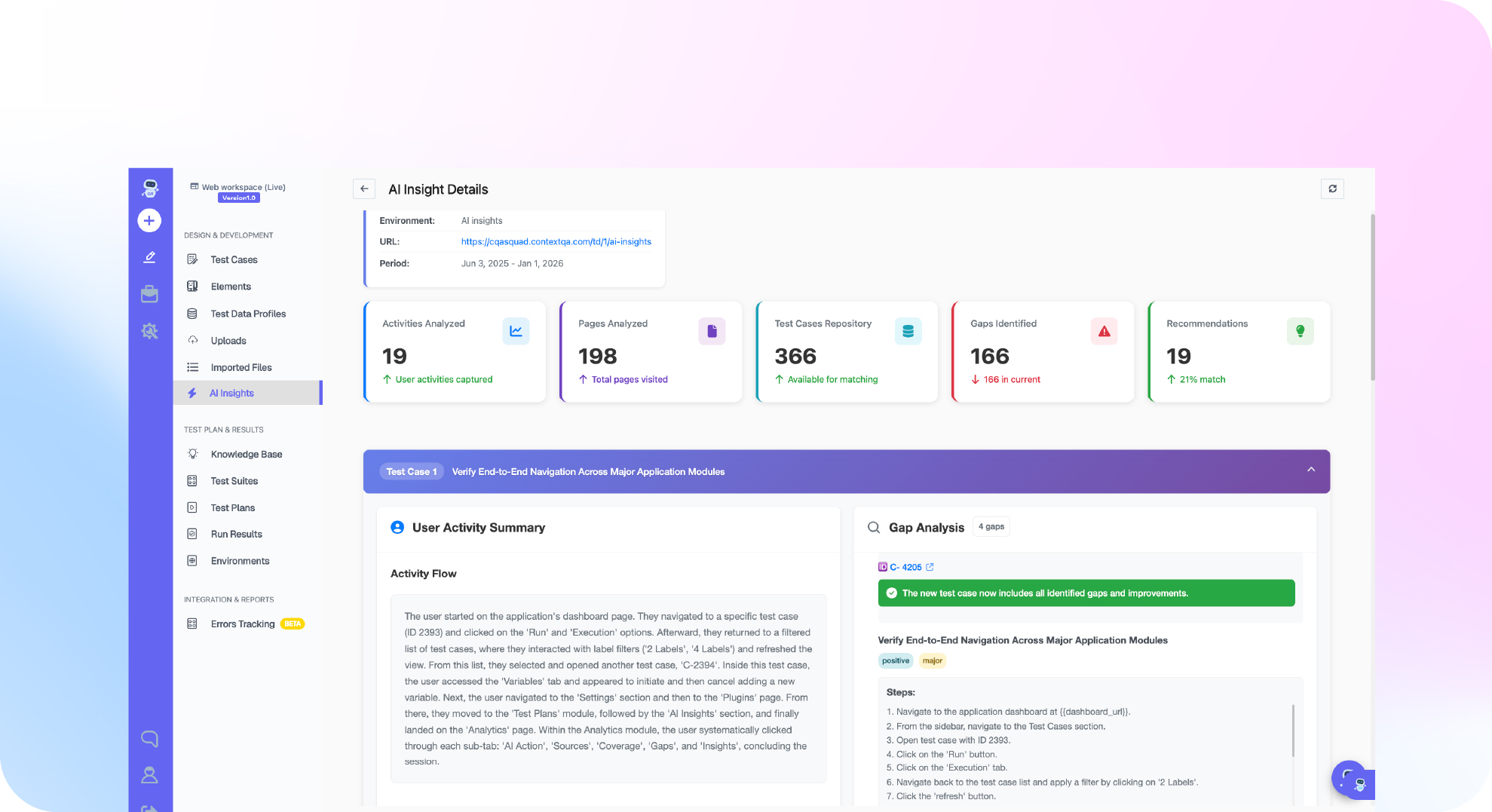

These hypotheses come from your architecture documentation, runbooks, and engineering team knowledge. ContextQA’s AI insights can help identify which components have the highest failure risk based on historical test and monitoring data.
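Hypotheses are easier to test when each one names a metric and a threshold. The sketch below records the load balancer hypothesis from the list above as data; the `Hypothesis` class and its field names are assumptions, and the observed values are invented for the demo.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    failure: str        # what is injected
    expectation: str    # what should happen, in words
    metric: str         # the measurement that decides pass or fail
    max_allowed: float  # the hypothesis holds if the observed value is <= this

    def holds(self, observed: float) -> bool:
        return observed <= self.max_allowed


failover = Hypothesis(
    failure="terminate one web server",
    expectation="load balancer routes traffic to healthy servers within 10 seconds",
    metric="seconds until no requests reach the terminated server",
    max_allowed=10.0,
)

print(failover.holds(observed=7.5))   # True: rerouted in time
print(failover.holds(observed=14.2))  # False: slower than the hypothesis allows
```

Writing the threshold down before the experiment keeps the team from rationalizing whatever number shows up afterwards.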

Phase 2: Experiment in Staging (Weeks 2 to 4)

Run your first chaos experiments in a non-production environment. Use a tool like Gremlin (enterprise platform with safety controls) or LitmusChaos (open source, Kubernetes-native).

Start small. Increase latency on one service by 200ms. Observe: does the application degrade gracefully? Do health checks detect the issue? Do alerts fire? Compare actual behavior against your hypothesis.

ContextQA’s web automation can run E2E tests during chaos experiments to measure user-visible impact. If your checkout flow breaks when the inventory service responds 200ms slower than usual, you have found a resilience gap before production users find it.
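Dedicated tools inject latency at the network or platform layer. For a first feel of the idea, the same fault can be approximated inside a test with a small decorator. This is an in-process sketch only: `with_injected_latency` and `check_inventory` are hypothetical names, and the demo records the delay instead of sleeping so it runs instantly.

```python
import time
from functools import wraps
from typing import Callable


def with_injected_latency(
    delay_seconds: float, sleep: Callable[[float], None] = time.sleep
):
    """Decorator that delays every call by `delay_seconds` before running it."""
    def decorator(func):
        @wraps(func)
        def wrapper(*args, **kwargs):
            sleep(delay_seconds)  # the injected fault
            return func(*args, **kwargs)
        return wrapper
    return decorator


slept = []  # record delays instead of really sleeping, to keep the demo instant


@with_injected_latency(0.2, sleep=slept.append)
def check_inventory(sku: str) -> bool:
    """Hypothetical inventory lookup standing in for a real service call."""
    return sku == "SKU-123"


print(check_inventory("SKU-123"), slept)
```

Dropping the `sleep=` argument makes the delay real, at which point an end-to-end test around the caller shows whether 200ms is absorbed or turns into a timeout.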

Phase 3: Integrate into CI/CD (Month 2)

Add chaos experiments to your pre-deployment pipeline. Before promoting a build to production, run a set of standard resilience checks: inject latency, terminate a service instance, and verify that the application handles it correctly.

ContextQA’s digital AI continuous testing runs automated checks through its CI integrations (Jenkins, GitHub Actions, GitLab CI). Adding chaos experiments to this pipeline ensures every release is validated for both functionality and resilience.
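In a pipeline, a resilience gate comes down to running a set of checks and returning a non-zero exit code if any of them fails. The sketch below shows that shape only; the check names are placeholders, and real checks would inject a fault and probe the application rather than return constants.

```python
from typing import Callable, Dict, List


def failed_checks(checks: Dict[str, Callable[[], bool]]) -> List[str]:
    """Run every resilience check and return the names of those that failed."""
    failed = []
    for name, check in checks.items():
        try:
            ok = check()
        except Exception:
            ok = False  # a crashing check counts as a failure, not a pass
        if not ok:
            failed.append(name)
    return failed


# Placeholder checks (assumptions for the demo).
checks = {
    "survives 200ms dependency latency": lambda: True,
    "survives loss of one service instance": lambda: False,
}

failures = failed_checks(checks)
exit_code = 1 if failures else 0  # a pipeline step would pass this to sys.exit()
print(exit_code, failures)
```

Because Jenkins, GitHub Actions, and GitLab CI all treat a non-zero exit code as a failed step, the same script can block promotion in any of them.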

Phase 4: Game Days in Production (Month 3+)

Once your staging experiments are passing consistently, graduate to production. Start with low-risk experiments during low-traffic hours. Monitor closely. Have rollback procedures ready.

The goal: build confidence that your production environment can handle the same failures your staging environment handles.
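"Monitor closely" works best as an automatic halt condition instead of a person watching a dashboard. Here is a minimal sketch, assuming error rates are sampled periodically while the experiment runs; `run_with_halt_condition` and the 5% default threshold are hypothetical, not taken from any tool.

```python
from typing import Callable, Iterable


def run_with_halt_condition(
    error_rate_samples: Iterable[float],
    rollback: Callable[[], None],
    max_error_rate: float = 0.05,
) -> bool:
    """Return True if the experiment ran to completion, False if it was halted early."""
    for rate in error_rate_samples:
        if rate > max_error_rate:
            rollback()  # remove the fault as soon as users are visibly affected
            return False
    rollback()  # normal end of the experiment: still remove the fault
    return True


rollbacks = []
completed = run_with_halt_condition(
    error_rate_samples=[0.01, 0.02, 0.09, 0.01],  # invented samples; the third breaches 5%
    rollback=lambda: rollbacks.append("fault removed"),
)
print(completed, rollbacks)
```

The important property is that rollback runs exactly once on both paths, so a production experiment never leaves the fault in place.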

Chaos Engineering vs Performance Testing vs Functional Testing

QA teams often ask how chaos engineering fits alongside their existing test types. Here is the practical distinction.

| Dimension | Functional Testing | Performance Testing | Chaos Engineering |
| --- | --- | --- | --- |
| Question answered | Does the feature work correctly? | Does it work under load? | Does the system survive failure? |
| What you inject | User inputs and actions | Traffic volume and concurrency | Infrastructure failures and degraded conditions |
| When it catches bugs | Before deployment | Before production load | Before production incidents |
| Test environment | Any environment | Production-like environment | Staging first, then production |
| ContextQA feature | Web automation, API testing | Performance testing | Performance + monitoring integration |

The real power comes from combining all three. Consider this scenario: your checkout flow passes all functional tests. It handles 10,000 concurrent users in performance testing. But what happens when 10,000 users hit the checkout flow while the payment service has 500ms of added latency? That compound scenario (load + degraded dependency) is where real production incidents happen. Neither functional testing nor performance testing alone would find this bug. Chaos engineering with concurrent load testing reveals it.
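Little's law gives a quick way to see why load plus added latency is worse than either alone: the average number of requests in flight equals the arrival rate multiplied by the time each request spends in the system. In the sketch below only the 500ms of added latency comes from the scenario above; the request rate, baseline latency, and pool size are assumed values.

```python
def in_flight_requests(requests_per_second: float, latency_ms: float) -> float:
    """Little's law: average requests in flight = arrival rate x time in system."""
    return requests_per_second * latency_ms / 1000


RATE = 1000        # requests per second at peak (assumed)
BASELINE_MS = 100  # normal checkout latency (assumed)
ADDED_MS = 500     # payment-service latency added in the scenario above
POOL_SIZE = 200    # worker threads or connections available (assumed)

normal = in_flight_requests(RATE, BASELINE_MS)
degraded = in_flight_requests(RATE, BASELINE_MS + ADDED_MS)
print(normal, degraded, degraded > POOL_SIZE)  # 100.0 600.0 True
```

Under these assumptions the pool has headroom in normal operation (100 of 200 slots) but needs three times its capacity once the dependency slows down, so requests queue and time out even though neither the load test nor the latency test fails in isolation.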

ContextQA’s digital AI continuous testing can run automated functional tests during chaos experiments, providing a unified view of both feature correctness and system resilience. The AI insights and analytics dashboard tracks how test results change during degraded conditions, revealing which user flows are most sensitive to infrastructure instability.

This is why organizations like Netflix, Amazon, Google, and Microsoft practice chaos engineering alongside traditional QA, not instead of it. Functional testing proves your code works. Performance testing proves it scales. Chaos engineering proves it survives.

Original Proof: ContextQA and System Resilience

ContextQA’s platform contributes to system resilience testing at multiple levels.

The IBM ContextQA case study documents the elimination of test flakiness, which is often caused by the same infrastructure instability that chaos engineering tests for. AI-based self-healing tests help ensure that test failures represent real bugs, not environmental noise.

G2 verified reviews report a 50% reduction in regression time. Part of that reduction comes from eliminating false failures caused by unstable test environments. When ContextQA’s root cause analysis classifies a failure as “environment issue” rather than “code defect,” QA teams save 30 to 90 minutes of investigation per false failure.

ContextQA’s performance testing establishes baselines that chaos experiments validate. If your performance baseline shows 200ms response time under normal load, a chaos experiment that injects 100ms of latency into a single call on the request path should result in roughly 300ms response time. If it results in 5 seconds (or a timeout), something is amplifying the delay, such as repeated calls to the slowed dependency, retries, or queueing, and your system’s latency tolerance is worse than expected.
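The comparison in the previous paragraph can be reduced to a single ratio: observed latency divided by the additive expectation. The helper name `latency_amplification` is hypothetical; the numbers are the ones used above.

```python
def latency_amplification(
    baseline_ms: float, injected_ms: float, observed_ms: float
) -> float:
    """Ratio of observed latency to the additive expectation (baseline + injected)."""
    return observed_ms / (baseline_ms + injected_ms)


print(round(latency_amplification(200, 100, 300), 2))   # 1.0: latency adds up as expected
print(round(latency_amplification(200, 100, 5000), 2))  # 16.67: the delay is being multiplied
```

A ratio near 1 supports the hypothesis. A ratio far above 1 is a prompt to look for whatever multiplies the injected delay before it reaches the user.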

The security testing module adds another resilience dimension: security chaos. What happens when your application receives malformed input during a period of high latency? Does the security layer still function? These compound failure scenarios are where real-world incidents happen.

Deep Barot, CEO and Founder of ContextQA, built the platform to connect testing with reliability engineering, recognizing that quality and resilience are inseparable. The IBM Build partnership validates this approach at enterprise scale.

Limitations and Honest Tradeoffs

Chaos engineering does not replace functional testing. Chaos validates resilience. Functional tests validate correctness. You need both. A system that recovers gracefully from a server failure but calculates prices incorrectly has two problems, not one.

Starting in production requires maturity. Do not run chaos experiments in production until you have observability (monitoring, alerting, logging), rollback procedures, and staging results that give you confidence. Starting before you are ready creates real incidents instead of controlled experiments.

Not every system is ready. Legacy monoliths without health checks, circuit breakers, or auto-scaling will fail every chaos experiment. That is valuable information (it tells you exactly where your resilience gaps are), but you need a remediation plan before the experiments, not after. Start by adding basic health checks and monitoring, then run experiments to validate they work as expected.

Cultural resistance is real. “Why would we deliberately break things?” is a common objection from leadership and engineering teams alike. The answer: because things break on their own, every single day. Server processes crash. Network connections time out. Disk space runs out. Cloud provider APIs return errors. These failures happen whether you test for them or not. Chaos engineering lets you choose when, where, and how failures occur, rather than discovering them at 3 AM during an incident when your on-call engineer is groggy and your customers are frustrated. The teams that practice chaos engineering consistently report fewer production incidents, shorter recovery times, and lower stress during outages because they have already rehearsed the response.

Do This Now Checklist

- List your application’s top 5 dependencies (10 min). Database, cache, payment gateway, email service, authentication provider. Those are your first chaos experiment candidates.

- Write 3 resilience hypotheses (10 min). “If X fails, Y should happen.” Be specific about both the failure and the expected response.

- Run a latency injection in staging (15 min). Add 500ms of latency to one service and observe how the application behaves. Compare against your hypothesis.

- Run ContextQA tests during the chaos experiment (15 min). Use web automation to verify user-visible behavior while infrastructure is degraded.

- Document findings (10 min). What matched your hypothesis? What surprised you? What needs to be fixed?

- Start a ContextQA pilot (15 min). Benchmark resilience testing alongside functional and performance testing over 12 weeks.

Conclusion

Chaos engineering tests the question that functional and performance tests cannot answer: what happens when things go wrong? By deliberately injecting controlled failures, QA teams discover broken fallbacks, missing health checks, and silent dependencies before production users discover them.

The approach is proven at Netflix scale, and Gartner Peer Insights lists chaos engineering tools as a market of their own. For QA teams, chaos experiments complement functional testing through ContextQA’s web automation, performance testing, and root cause analysis.

Book a demo to see how ContextQA validates both functionality and resilience for your application.