TL;DR: Agentic AI in software testing refers to autonomous AI systems that can plan, execute, and adapt testing workflows with minimal human direction. Unlike traditional AI that generates test scripts on command, agentic AI makes its own decisions about what to test, when to test it, and how to respond when something breaks. Gartner predicts 40% of enterprise applications will feature task specific AI agents by end of 2026, up from less than 5% in 2025. For QA teams, this is not a future trend. It is happening right now, and the teams that understand the difference between AI assisted testing and agentic testing will be the ones that ship faster without breaking things.

Definition: Agentic AI in Software Testing

Autonomous AI systems that independently plan, execute, and adapt software testing workflows based on goals rather than scripts. Unlike traditional automation (which follows predefined steps) or AI assisted testing (which generates scripts on command), agentic AI agents perceive application changes, decide what testing actions to take, execute those actions, analyze results, and iterate, all with minimal human intervention. Forrester formally recognized this shift by renaming its testing category from “Continuous Automation Testing Platforms” to “Autonomous Testing Platforms” in Q3 2025.

Quick Answers:

What is agentic AI in testing?

Agentic AI uses autonomous agents that independently plan what to test, execute tests, analyze failures, and adapt their strategy based on results. The agent operates on goals (“verify checkout works after this code change”) rather than scripts (“click button X, then verify element Y”). It can read requirements, generate test cases, run them, diagnose failures, and even file bug reports without human intervention at each step.

How is agentic testing different from AI assisted testing?

AI assisted testing helps humans write tests faster (autocomplete, code generation, script suggestions). Agentic testing replaces the human decision loop entirely for routine testing tasks. The human defines the goal and reviews the output. The agent handles everything in between.

Is agentic AI replacing QA engineers?

No. The Stack Overflow 2025 Developer Survey shows 70% of developers do not see AI as a threat to their jobs. Agentic AI handles repetitive pattern matching (regression, smoke tests, visual comparison). Human testers focus on strategy, exploratory testing, and edge case discovery that requires domain knowledge and creative thinking.

Why Agentic AI Testing Matters Right Now

I want to put some numbers on the table before we get into the details.

The Gartner prediction is striking: 40% of enterprise applications with AI agents by end of 2026, up from under 5% in 2025. That is at least an eightfold increase in a single year. But Gartner also warns that over 40% of agentic AI projects will be canceled by end of 2027 due to escalating costs and unclear value. Both predictions can be true at once. The technology works. The implementation is where teams fail.

McKinsey’s State of AI 2025 survey (1,993 participants across 105 countries) found that 23% of enterprises are already scaling agentic AI in at least one business function, with another 39% actively experimenting. But only about 6% qualify as “AI high performers” seeing measurable bottom line impact.

The Stack Overflow 2025 Developer Survey (49,000+ responses) adds the developer perspective: 84% of developers use or plan to use AI tools. But only 31% currently use AI agents, and 38% have no plans to adopt them. Among those who do use agents, 69% report increased productivity, yet 87% remain concerned about accuracy.

That last number is the one I keep coming back to. 87% concerned about accuracy. And that is exactly why agentic AI in testing matters more than agentic AI anywhere else. Testing is the one place where accuracy concerns are not a blocker; they are the entire point. An agentic testing system that finds a bug nobody asked it to look for is doing its job. An agentic coding system that introduces a bug nobody asked for is not.

ContextQA’s AI testing suite and CodiTOS (Code to Test in Seconds) were built on this principle. The AI agent watches your code changes, decides which tests to create, generates them, runs them, and reports what it found. You define the quality goal. The agent figures out how to verify it.

The Four Levels of AI in Testing

Not every “AI powered” testing tool is agentic. Here is how to tell the difference.

| Level | What It Does | Human Role | Example |
| --- | --- | --- | --- |
| Level 0: Traditional Automation | Executes predefined scripts in fixed sequence | Human writes every test, maintains every selector, investigates every failure | Record and playback tools, basic scripting frameworks |
| Level 1: AI Assisted | AI helps humans write tests faster (autocomplete, code generation, suggestions) | Human makes all decisions. AI accelerates execution of those decisions | AI code completion in IDE, ChatGPT generating test scripts |
| Level 2: AI Augmented | AI handles specific subtasks autonomously (self healing selectors, failure classification, test prioritization) | Human sets strategy and reviews AI decisions. AI handles routine subtasks | ContextQA AI based self healing, root cause analysis |
| Level 3: Agentic | AI autonomously plans, executes, and adapts testing workflows based on goals | Human defines quality goals and reviews outcomes. Agent handles the entire testing loop | ContextQA CodiTOS generating tests from code changes, autonomous regression orchestration |

Most teams in 2026 operate between Level 1 and Level 2. The opportunity is moving to Level 3 for the 80% of testing that is repetitive and pattern based, while keeping humans at Level 2 for the 20% that requires judgment.

Forrester recognized this shift by renaming its entire testing category from “Continuous Automation Testing Platforms” to “Autonomous Testing Platforms” in Q3 2025. They profiled 31 vendors in the landscape and evaluated 15 in the Q4 2025 Wave. Their key finding: the industry has plateaued at roughly 25% automated test coverage, and agentic AI is the expected mechanism to break through that ceiling.

How Agentic AI Testing Actually Works

I want to be specific here because too many articles describe agentic AI in vague terms like “intelligent” and “autonomous” without explaining the actual mechanism.

An agentic testing system operates through a continuous loop of four phases.

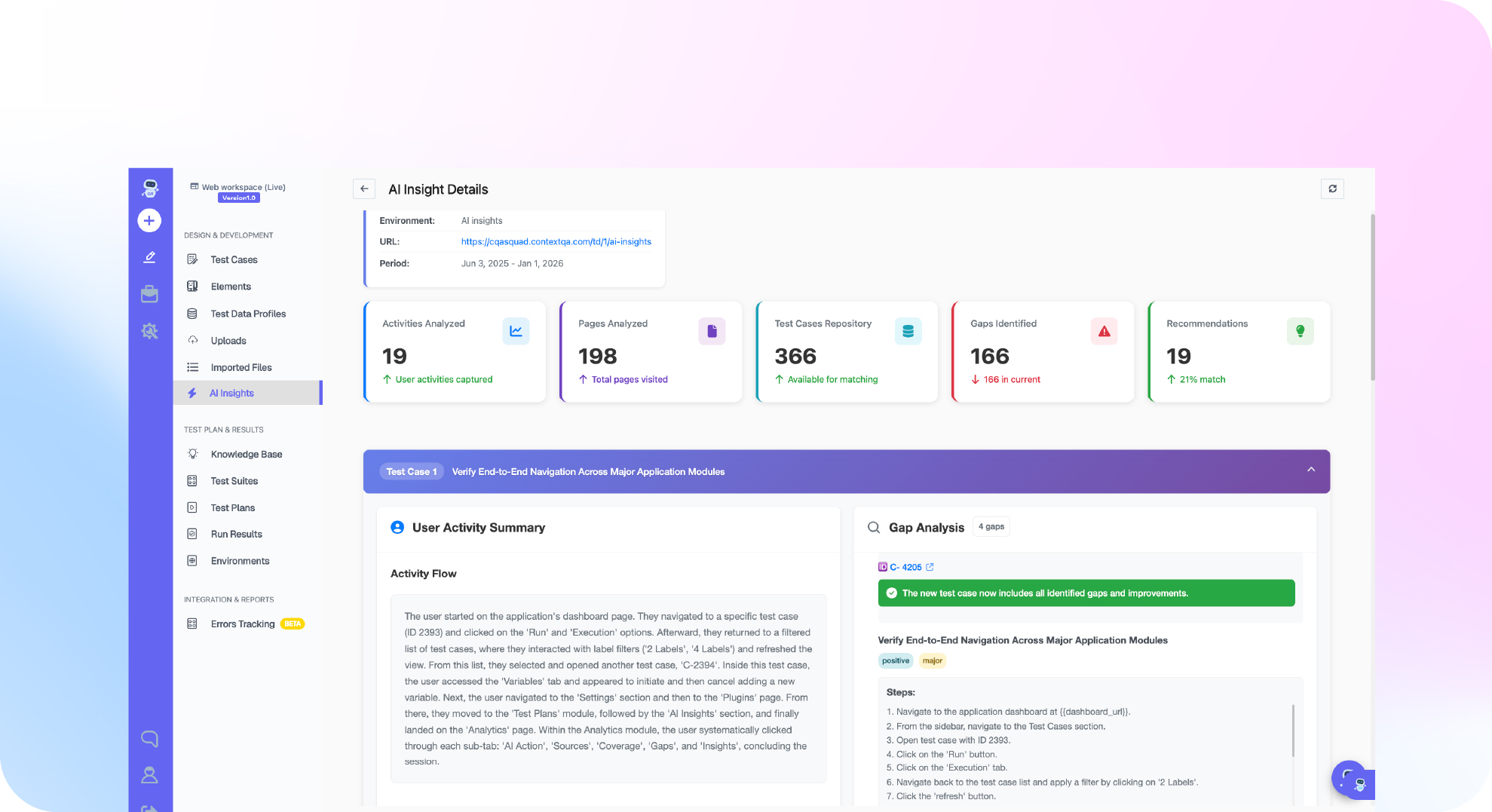

Phase 1: Perceive. The agent monitors signals from your development workflow. Code commits. Pull requests. Jira tickets. Deployment events. Application logs. It understands what changed and what areas of the application are affected. ContextQA’s AI insights and analytics provides this perception layer, tracking which code modules map to which test coverage.

Phase 2: Plan. Based on what changed, the agent decides what to test. If a developer modified the checkout payment processing, the agent identifies the checkout flow tests, related API contract validations, visual regression checks on the payment page, and performance benchmarks for the payment endpoint. It prioritizes by risk: high traffic flows first, edge cases second.
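To make the planning step concrete, here is a minimal Python sketch of risk based test selection. It is an illustration under stated assumptions, not ContextQA's implementation: the `CandidateTest` type, the `COVERAGE_MAP` contents, the file paths, and the 1 to 10 risk scale are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CandidateTest:
    name: str
    layer: str  # e.g. "e2e", "api", "visual", "performance"
    risk: int   # hypothetical 1-10 scale; higher runs earlier


# Hypothetical mapping from code modules to the tests that cover them.
COVERAGE_MAP = {
    "checkout/payment.py": [
        CandidateTest("checkout_flow", "e2e", 9),
        CandidateTest("payment_api_contract", "api", 8),
        CandidateTest("payment_page_visual", "visual", 5),
        CandidateTest("payment_endpoint_latency", "performance", 4),
    ],
    "profile/avatar.py": [
        CandidateTest("avatar_upload", "e2e", 3),
    ],
}


def plan_tests(changed_files):
    """Return the tests affected by the changed files, highest risk first."""
    selected = {}
    for path in changed_files:
        for test in COVERAGE_MAP.get(path, []):
            selected[test.name] = test  # dedupe tests shared by several files
    # Sort by descending risk; the name breaks ties deterministically.
    return sorted(selected.values(), key=lambda t: (-t.risk, t.name))
```

A real agent would derive the mapping and the risk scores from coverage data and traffic rather than a hand written dictionary, but the shape of the decision is the same: changed files in, ordered test list out.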

Phase 3: Execute. The agent runs the selected tests across the relevant layers. Web automation for browser flows. API testing for service contracts. Visual regression for UI appearance. Mobile automation for cross device validation. If a test fails because a UI element changed (not a bug, just a CSS refactor), the AI based self healing engine updates the selector automatically and continues.
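The self healing idea can be sketched as a selector fallback. This is a deliberately simplified stand-in, assuming a hypothetical `find` callable and a plain dictionary in place of a real DOM; a production engine would rank candidate selectors with far richer signals than a fixed fallback list.

```python
def locate_with_healing(find, primary, fallbacks):
    """Try the primary selector, then each fallback; report which one matched.

    `find` is any callable that returns an element, or None when the
    selector matches nothing (an assumption made for this sketch).
    Returns (element, selector_used, healed).
    """
    element = find(primary)
    if element is not None:
        return element, primary, False
    for candidate in fallbacks:
        element = find(candidate)
        if element is not None:
            # Healed: the caller can persist `candidate` as the new primary.
            return element, candidate, True
    raise LookupError(f"no selector matched: {[primary, *fallbacks]}")


# Simulated DOM after a CSS refactor removed the button's old id.
dom = {"[data-test=pay]": "<button>", "button.pay-now": "<button>"}
element, used, healed = locate_with_healing(
    dom.get, "#pay-btn", ["[data-test=pay]", "button.pay-now"]
)
# used == "[data-test=pay]", healed is True
```

Returning the `healed` flag matters: the run continues, but the change is still visible to a human who wants to audit what the agent rewrote.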

Phase 4: Analyze and Adapt. The agent classifies each failure. Real bug? Test maintenance issue? Environment problem? Transient flake? ContextQA’s root cause analysis traces failures through visual, DOM, network, and code layers to provide this classification. The agent then adapts: it updates its test selection model based on what it learned, so the next cycle is smarter than the last.
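The four way classification can be illustrated with a few keyword rules. Treat this as a toy sketch: the `FailureKind` names mirror the categories above, but the keyword lists and the `passed_on_retry` signal are assumptions chosen for illustration, and a real system would combine visual, DOM, network, and code evidence instead of string matching.

```python
from enum import Enum


class FailureKind(Enum):
    REAL_BUG = "real bug"
    TEST_MAINTENANCE = "test maintenance"
    ENVIRONMENT = "environment"
    FLAKE = "transient flake"


# Illustrative keyword lists; real classifiers use much richer evidence.
ENVIRONMENT_HINTS = ("connection refused", "dns", "503")
MAINTENANCE_HINTS = ("no such element", "selector", "stale element")


def classify_failure(error_text, passed_on_retry):
    """Classify a failed test using simple, illustrative rules."""
    text = error_text.lower()
    if passed_on_retry:
        return FailureKind.FLAKE
    if any(hint in text for hint in ENVIRONMENT_HINTS):
        return FailureKind.ENVIRONMENT
    if any(hint in text for hint in MAINTENANCE_HINTS):
        return FailureKind.TEST_MAINTENANCE
    # Nothing points at the test or the environment, so escalate as a bug.
    return FailureKind.REAL_BUG
```

Defaulting to `REAL_BUG` is a conservative choice: an unexplained failure gets human attention rather than being filed away as noise.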

This four phase loop runs on every commit, every merge, every deployment. No human has to decide “which tests should I run?” or “is this failure real?” for the routine 80% of scenarios. The QA team focuses on exploratory testing, test strategy, and the complex edge cases that require domain expertise.
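Stripped to its skeleton, one pass of the loop is four function calls plus some state carried between cycles. The sketch below uses hypothetical stub functions and a made-up `state` dictionary of failure counts, purely to show how the adapt step can feed the next plan step.

```python
def run_cycle(perceive, plan, execute, analyze, state):
    """Run one perceive -> plan -> execute -> analyze pass; return new state."""
    changes = perceive()
    tests = plan(changes, state)
    results = execute(tests)
    return analyze(results, state)


# Hypothetical stubs standing in for the real phases.
def perceive():
    return ["checkout/payment.py"]


def plan(changes, state):
    candidates = ["checkout_flow", "payment_api_contract"] if changes else []
    # Tests that failed more often in earlier cycles run first.
    return sorted(candidates, key=lambda name: -state.get(name, 0))


def execute(tests):
    # Pretend the API contract test fails and everything else passes.
    return {name: name != "payment_api_contract" for name in tests}


def analyze(results, state):
    updated = dict(state)
    for name, passed in results.items():
        if not passed:
            updated[name] = updated.get(name, 0) + 1
    return updated


state = run_cycle(perceive, plan, execute, analyze, {})
# After one cycle the failing test is prioritized in the next plan.
next_plan = plan(perceive(), state)
```

Here "smarter" only means reordering by past failures. The point is the structure: analysis writes to state that planning reads.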

What Meta’s Research Tells Us About Where This Is Headed

In February 2026, Meta Engineering published research on Just in Time Tests (JiTTests). This represents the leading edge of agentic testing at massive scale.

The core idea: instead of maintaining a permanent test suite, AI generates fresh tests for every code change based on the specific lines modified. The tests exist only for that change. They require no maintenance. They require no test code review. They target exactly the code paths affected by the diff.
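Targeting "the specific lines modified" starts with knowing which lines those are. The Python sketch below is not Meta's implementation; it only shows one plausible first step, extracting added or modified line numbers from a unified diff, which a test generator could then take as input. It is simplified and assumes well formed `git diff` style input.

```python
import re

# Matches a hunk header such as "@@ -10,2 +10,3 @@" and captures the
# starting line number on the new-file side.
HUNK_HEADER = re.compile(r"^@@ -\d+(?:,\d+)? \+(\d+)(?:,\d+)? @@")


def changed_lines(diff_text):
    """Map each file in a unified diff to the new-file lines it adds or changes."""
    result = {}
    current_file = None
    new_line = 0
    for line in diff_text.splitlines():
        if line.startswith("+++ "):
            path = line[4:]
            current_file = path[2:] if path.startswith("b/") else path
            result[current_file] = []
            continue
        match = HUNK_HEADER.match(line)
        if match:
            new_line = int(match.group(1))
        elif current_file is None or line.startswith("--- "):
            continue  # preamble or old-file header
        elif line.startswith("+"):
            result[current_file].append(new_line)
            new_line += 1
        elif line.startswith(" "):
            new_line += 1  # context line: present in the new file, unchanged
        # Lines starting with "-" were removed and have no new-file number.
    return result


sample = "\n".join([
    "--- a/cart.py",
    "+++ b/cart.py",
    "@@ -10,2 +10,3 @@ def total(items):",
    "     subtotal = sum(i.price for i in items)",
    "-    return subtotal",
    "+    tax = subtotal * TAX_RATE",
    "+    return subtotal + tax",
])
print(changed_lines(sample))  # {'cart.py': [11, 12]}
```

One known limitation of this sketch: an added line whose content itself begins with `++ ` would be misread as a file header, so a production parser should track hunk lengths instead of relying on prefixes alone.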

This is a radical departure from traditional testing where you build and maintain a growing suite of permanent tests. Meta’s approach treats tests as disposable artifacts produced by the agent for each change, verified, and discarded.

I do not think every organization should adopt JiTTests today. The technique requires extremely mature CI/CD infrastructure and significant AI investment. But it shows the direction: agentic systems that treat test creation as a continuous, automated process rather than a one time human activity.

ContextQA’s approach sits between traditional suites and JiTTests. The platform maintains persistent test coverage (your regression suite, your smoke tests) but uses agentic AI to supplement that coverage with dynamically generated tests for each code change through CodiTOS. The persistent tests provide baseline confidence. The dynamic tests provide change specific validation.

The Trust Problem and How to Solve It

Here is the honest part. The GitLab 2025 DevSecOps Report found that only 37% of developers would trust AI output without human review. The same report found teams lose approximately 7 hours per week per developer to AI related inefficiencies, including reviewing, correcting, and re running AI generated code.

This trust deficit is real and legitimate. But in testing, it plays out differently than in coding.

When an AI agent writes production code that is incorrect, the code ships a bug to users. When an AI agent writes a test that is incorrect, the most common result is that the test fails and a human reviews the failure. The typical failure mode of AI generated tests is a false alarm, not a production defect. False alarms waste time, but they do not break your product. The exception is a test that passes when it should not, which is why human review of generated tests matters early on. Even so, that asymmetry makes testing the safest domain for agentic AI adoption.

The practical trust building approach:

Week 1 to 2: Run agentic tests in shadow mode alongside your existing suite. Compare results. Build confidence that the agent’s decisions align with what your team would have decided manually.

Week 3 to 4: Let the agent generate tests for low risk areas (unit tests, visual regression checks, smoke tests). Humans review the generated tests before they become quality gates.

Week 5 to 8: Expand to medium risk areas (API contract validation, cross browser testing). The agent generates and executes. Humans review failures, not the tests themselves.

Week 9 to 12: Full agentic operation for routine testing. The agent owns the entire loop for the 80% that is pattern based. Humans own strategy, exploratory testing, and edge cases.

This 12 week rollout maps directly to ContextQA’s pilot program, which benchmarks the platform’s impact over 12 weeks with measurable before and after metrics.
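For the shadow mode weeks, "compare results" can be as simple as measuring how often the agent's verdicts match the existing suite's. The sketch below assumes both sides report a pass or fail per named check; the function name, the report keys, and the sample check names are hypothetical.

```python
def shadow_report(baseline, agent):
    """Compare pass/fail verdicts from the existing suite and the agent.

    Both arguments map a check name to True (pass) or False (fail).
    """
    shared = sorted(baseline.keys() & agent.keys())
    disagreements = [name for name in shared if baseline[name] != agent[name]]
    # With no overlapping checks there is nothing to compare.
    agreement = None if not shared else 1 - len(disagreements) / len(shared)
    return {
        "agreement": agreement,
        "disagreements": disagreements,
        "agent_only": sorted(agent.keys() - baseline.keys()),
    }


baseline = {"login": True, "checkout": False, "search": True, "profile": True}
agent = {"login": True, "checkout": False, "search": False, "profile": True, "refund": True}
report = shadow_report(baseline, agent)
# report["agreement"] == 0.75, report["disagreements"] == ["search"]
```

The disagreements list is the useful part. Each entry is either a bug the existing suite missed or a false alarm from the agent, and reviewing them is how the team calibrates trust before moving to the next stage.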

Original Proof: ContextQA’s Agentic Testing in Production

ContextQA was built as an agentic AI testing platform from day one, not a traditional automation tool with AI bolted on.

The IBM ContextQA case study documents 5,000 test cases migrated using IBM’s watsonx.ai NLP, with test flakiness eliminated through AI powered element identification. This is not AI assisting a human migration. This is AI performing the migration autonomously, reading existing tests, understanding their intent, and recreating them in a new format.

G2 verified reviews show the outcomes that matter: 50% reduction in regression testing time. 80% automation rates (up from 30 to 40% baselines). 150+ backlog test cases cleared in the first week. These results come from the agentic approach: the AI does not wait for humans to tell it what to test next. It identifies gaps, generates coverage, and executes continuously.

Deep Barot, CEO and Founder of ContextQA, described the platform philosophy in a DevOps.com interview: AI should run 80% of common tests, running the right test at the right time. That “right test at right time” formulation is the essence of agentic testing. The agent decides what is right based on context, not a predefined script.

The platform covers the full agentic testing stack: perception (monitoring code changes and CI events), planning (intelligent test selection through risk based testing), execution (web, mobile, API, visual, performance, security, database, Salesforce, ERP/SAP testing), and analysis (AI insights with root cause classification). The IBM Build partnership and G2 High Performer recognition validate this at enterprise scale.

Limitations and When Agentic AI Falls Short

I would not be credible if I only talked about what works.

Agentic AI cannot do exploratory testing. Discovering bugs nobody thought to look for requires human creativity, domain knowledge, and the kind of lateral thinking that AI does not have. An agent can verify that a payment flow processes correctly. It cannot wonder “what happens if a user submits the same payment twice in 500ms while on a spotty mobile connection?” That question comes from human experience.

The 40% cancellation prediction is real. Gartner’s warning that over 40% of agentic AI projects will be canceled by 2027 should be taken seriously. The most common failure mode is deploying agents without clear success metrics, adequate data, or appropriate governance. Start with a specific, measurable goal (reduce regression time by 40%, achieve 80% automation rate) and expand only after hitting it.

AI generated tests can be wrong. An agent that generates a test from requirements may misinterpret the requirement. An agent that generates tests from code may test the implementation rather than the specification. Human review of agent output is essential during the first months and remains important for complex business logic going forward.

Not every team is ready. If your team does not have CI/CD pipelines, version control, or basic test infrastructure, agentic AI will not help. You need the foundation before you add intelligence on top. ContextQA’s integrations connect to Jenkins, GitHub Actions, GitLab CI, CircleCI, Jira, and other tools that form this foundation.

Do This Now Checklist

- Assess your current automation coverage (10 min). What percentage of your test cases are automated? If it is around 25% or lower, you are at or below the industry plateau that Forrester identifies. Agentic AI can break through it.

- Identify your top 10 repetitive testing tasks (15 min). Regression runs, smoke tests, visual checks, API validations. These are the first candidates for agentic automation.

- Measure your test maintenance burden (10 min). How many hours per sprint does your team spend fixing broken tests that fail due to UI changes rather than real bugs? AI based self healing eliminates most of this.

- Calculate your feedback loop time (5 min). Measure the minutes from code push to test results. If it is over 15 minutes, intelligent test selection can often bring it under 10.

- Run the ROI calculator (5 min). Model your projected savings from agentic testing against your current team metrics.

- Start a ContextQA pilot (15 min). 12 weeks to benchmark agentic AI testing against your current approach with measurable data.

Conclusion

Agentic AI testing is the biggest shift in QA since the move from manual to scripted automation. The Gartner, Forrester, and McKinsey data cited above all point the same way: the market is moving from AI assisted work toward autonomous agents, and Forrester’s category rename makes that explicit for testing. The organizations that adopt it will break through the roughly 25% automation plateau that has limited the industry for years.

ContextQA’s AI testing suite, CodiTOS, self healing, and root cause analysis deliver the full agentic testing loop: perceive changes, plan test strategy, execute across all platforms, and analyze results with intelligent classification.

Book a demo to see agentic AI testing running on your application.